What I learned from reading Gartner's 2026 Light IGA report

Key Takeaways

Gartner's 2026 Light IGA research recognizes the shift toward AI-native identity governance as a present reality, not a future state. Opti was included in that research months after launch.

The governance gap most organizations face is architectural, not accidental. Legacy platforms were built for a world that no longer exists and cannot be fixed with more configuration on top of the wrong foundation.

AI-native governance is a fundamentally different model. It is not a better version of legacy IGA but a different relationship between a platform and the environment it governs: continuous, adaptive, and real-time rather than periodic and manual.

Three questions reveal whether your current setup is built for what is coming: Is your role model current? Do your access reviews actually change outcomes? Can your compliance program enforce SOD across your entire application estate?

AI agents are the next identity governance frontier. Most organizations already have them operating with ungoverned access. Gartner names them as a distinct identity type with their own governance requirements.

The organizations that understand this shift clearly and act on it early will be significantly ahead of those that wait for the market to force the decision.

A few months ago, Opti launched.

This month, Gartner included us in their 2026 Light IGA research.

I want to be direct about what that means and why I think it matters beyond recognition itself. Gartner did not include us because we have been around the longest or because we have the largest customer base. They included us in the context of a shift they are tracking across the entire identity governance market: the movement away from legacy IGA architecture toward AI-native approaches that are fundamentally different in how they work.

That shift is the real story in this report. And it is worth understanding clearly, because it changes how IAM teams should be thinking about every decision they make in this space right now.

What the report is and why it exists

Light IGA is the faster, leaner version of identity governance. Instead of a multi-year, multi-million-dollar implementation, you get a platform that deploys in days, covers the expected IAM use cases, and often comes bundled with tools your organization already owns.

Adoption has accelerated significantly. The appeal is obvious: lower cost, faster time to value, and less burden on already-stretched IAM teams. Gartner notes that nearly half of organizations are not adequately staffed or funded for large IAM modernization efforts. Light IGA exists to serve exactly that reality.

But the report exists because something more fundamental is happening beneath the surface of that adoption story. The legacy IGA model, which dominated this space for nearly two decades, was built for a world that no longer exists for most organizations. On-premises infrastructure. Stable application estates. A sole focus on human identities. Large dedicated IAM teams. Multi-year implementation cycles that were considered normal and acceptable.

That world is gone. And the governance programs built for it are increasingly showing their limits in ways that are difficult to paper over with more configuration or more consultants.

Gartner's report is a decision framework for teams figuring out what to do next. Not just which vendor to choose, but which model of identity governance is actually built for where their organization is going.

The finding that stopped me: the gap is architectural, not accidental

This is the insight I keep coming back to.

The organizations struggling most with identity governance are not struggling because of execution failures. They are struggling because the platforms they invested in were designed for a different architectural reality.

Legacy IGA platforms were built around a specific assumption: that your application estate was relatively stable, largely predictable, and could be fully modeled in advance. Implementation teams would spend months mapping integrations, building connectors, and configuring workflows before anything went live. Once it was live, someone had to maintain it manually as the environment changed.

That assumption does not hold for most organizations today. Application use is dynamic. Cloud-native infrastructure changes constantly. New tools get adopted faster than any implementation timeline can accommodate. The workforce using those tools looks nothing like the workforce those platforms were designed to govern.

The result is a governance gap that grows quietly. Not because anyone made a bad decision. Because the platform was never designed to keep up with the pace at which modern organizations change.

This is not a configuration problem. It is an architectural one. And that distinction matters enormously, because you cannot fix an architectural problem with more effort on top of the wrong foundation.

What AI-native governance actually means in practice

This is the part of the report I find most significant, and the part that I think most commentary on it misses.

The shift toward AI-native identity governance is not about adding a machine learning module to a legacy platform. It is not about a chatbot for access requests or a dashboard with better visualizations. Those are features. What Gartner is tracking is something deeper: a fundamentally different approach to how governance works.

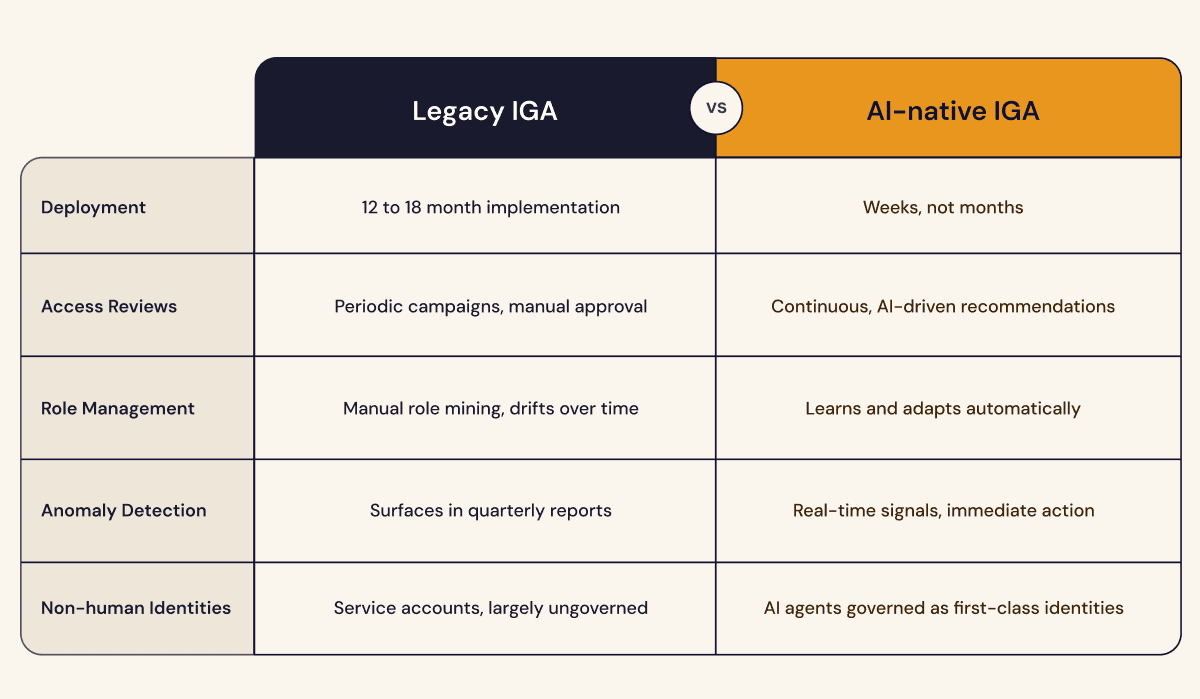

Legacy governance is built around configuration and periodic intervention. You define rules. You build workflows. You run campaigns on a schedule. You respond to what the platform surfaces when someone reviews a report. The system executes what you told it to do, and when your environment changes, someone has to go back in and reconfigure it to match.

AI-native governance is built around continuous learning and real-time adaptation. Like your identity specialists today, the platform starts with broad knowledge of IAM processes and builds an understanding of your specific environment on top of it. It learns what normal looks like, identifies what is anomalous, and surfaces the right information to the right person at the right moment without waiting for a scheduled campaign to trigger it. Governance becomes something that happens continuously rather than periodically.

The practical difference shows up in several specific ways.

Access reviews stop being a calendar event and start being an ongoing process. Instead of a manager receiving a list of 200 access items to approve once a quarter, an AI-native platform surfaces the three or four access decisions that actually need human judgment right now, with the context needed to make a real decision rather than a default approval. The reviewer is not fighting certification fatigue. They are actually governing.

Role management and realignment stop being a project and start being a continuous process. An AI-native platform does not just assign roles. It monitors how access is actually being used, identifies patterns that suggest a role is no longer accurate, and updates its understanding of what roles should look like without requiring a dedicated role mining exercise to kick off the process.

Identity risk elimination stops being a one-time discovery exercise and becomes routine. As applications change, as permissions evolve, as new entitlements are created, the platform correlates those changes across the enterprise landscape, surfaces the resulting risks immediately, and suggests concrete mitigation steps.

This is not a marginal improvement on the legacy model. It is a different relationship between a governance and security platform and the environment it governs. One that is built to keep pace with how organizations actually change rather than requiring organizations to slow down to match what the platform can handle.

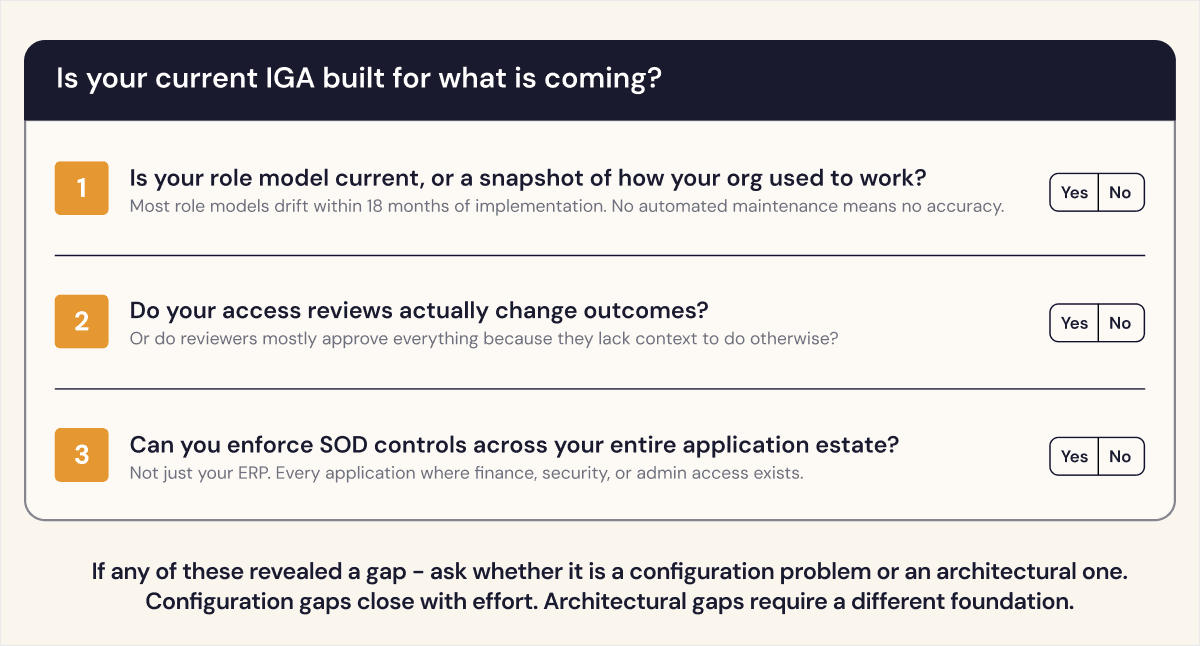

Three questions that reveal whether your current setup is built for this

Understanding the shift toward AI-native governance is useful. Knowing where your own program stands relative to it is more useful.

These three questions are the most practical diagnostic I found in Gartner's report. They are not about which vendor you are running. They are about whether the architectural foundation you are on can deliver what modern governance requires.

Is your role model current, or is it a snapshot of how your organization used to work?

“We have more roles than we have employees.” Does that sound familiar? Role models drift. People change jobs. Teams reorganize. Applications evolve. Most organizations that have been running IGA for more than two years have a role model that was accurate at implementation and has been slowly diverging from reality ever since.

The problem is not that organizations do not want accurate role models. The problem is that keeping a role model current requires continuous effort that consistently gets deprioritized against more urgent work. An AI-native platform does not treat role management as a project. It treats it as a continuous process, monitoring usage patterns and updating its understanding of what roles should look like, much as a human specialist would, without waiting for someone to schedule a role update project.
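To make "monitoring usage patterns" concrete, here is a minimal sketch of one way role drift could be detected. It is my own illustration, not something from Gartner's report and not a description of how any particular platform works. The function name, the data shapes, and the 50% usage threshold are all assumptions.

```python
# Illustrative sketch only: names, data shapes, and the threshold are assumptions.

def role_drift(role_entitlements, usage_by_member, min_usage_share=0.5):
    """Split a role's entitlements into 'current' and 'stale'.

    role_entitlements: set of entitlements the role grants.
    usage_by_member:   {member: set of entitlements that member actually used}.
    An entitlement is 'stale' when fewer than min_usage_share of the role's
    members used it in the observation window.
    """
    members = len(usage_by_member)
    current, stale = set(), set()
    for entitlement in role_entitlements:
        users = sum(1 for used in usage_by_member.values() if entitlement in used)
        share = users / members if members else 0.0
        if share >= min_usage_share:
            current.add(entitlement)
        else:
            stale.add(entitlement)
    return current, stale


# Hypothetical role and usage data.
finance_analyst = {"erp:read", "erp:post_journal", "legacy_bi:admin"}
observed_usage = {
    "a": {"erp:read", "erp:post_journal"},
    "b": {"erp:read"},
    "c": {"erp:read", "erp:post_journal"},
    "d": {"erp:read"},
}

current, stale = role_drift(finance_analyst, observed_usage)
print("still in use:", sorted(current))
print("candidates for removal:", sorted(stale))
```

In this toy data nobody in the role has touched `legacy_bi:admin`, so it is the one entitlement flagged as a removal candidate. The point is that the check is cheap enough to run continuously rather than once per role mining project.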

Do your access reviews actually change outcomes?

This is the most honest version of the access review question. Not: do you run access reviews? Not: do you generate audit artifacts? But: when your reviewers complete a certification campaign, does access actually change in ways that reflect genuine governance decisions?

If the answer is that reviewers mostly click approve because they lack the context to do anything else, you have a process that satisfies a compliance requirement on paper but does not deliver governance in practice. AI-native platforms change this by bringing intelligence to the review: usage data, anomaly signals, peer group comparisons, and risk scoring. The reviewer sees a recommendation with reasoning. The default is no longer automatic approval.
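As a rough illustration of how those signals could be combined, the sketch below scores each access item from usage data, a peer group comparison, and a privilege flag, then keeps only the items that cross a threshold. Everything in it is an assumption made for the example: the field names, the weights, the 90-day idle window, and the 0.6 cutoff. It is not taken from the report or from any vendor's scoring model.

```python
# Illustrative sketch only: fields, weights, and thresholds are assumptions.
from dataclasses import dataclass


@dataclass
class AccessItem:
    user: str
    entitlement: str
    days_since_last_use: int  # usage data
    peer_share: float         # fraction of the user's peer group holding the same entitlement
    privileged: bool          # whether the entitlement is high-impact


def risk_score(item: AccessItem) -> float:
    """Combine the signals into a score between 0 and 1."""
    unused = min(item.days_since_last_use / 90, 1.0)  # saturates after 90 idle days
    outlier = 1.0 - item.peer_share                   # rare among peers means higher risk
    privilege = 1.0 if item.privileged else 0.0
    return round(0.4 * unused + 0.4 * outlier + 0.2 * privilege, 2)


def needs_human_review(items, threshold=0.6):
    """Return (score, item) pairs at or above the threshold, riskiest first."""
    scored = [(risk_score(item), item) for item in items]
    flagged = [pair for pair in scored if pair[0] >= threshold]
    return sorted(flagged, key=lambda pair: pair[0], reverse=True)


# Hypothetical review queue.
items = [
    AccessItem("dana", "erp:approve_payments", 120, 0.10, True),
    AccessItem("dana", "wiki:read", 2, 0.95, False),
    AccessItem("lee", "crm:export_all", 45, 0.20, False),
]

for score, item in needs_human_review(items):
    print(f"{score:.2f} {item.user} {item.entitlement}")
```

With these made-up numbers, only the unused, privileged, peer-outlier entitlement reaches the reviewer. The other two items never land in the queue, which is the difference between reviewing three items and rubber-stamping two hundred.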

Can your compliance program enforce SOD controls across your entire application estate?

Segregation of duties controls are only meaningful if they actually reach all the applications in scope. A SOD policy that covers your ERP but not the twelve other systems where finance team members have access is not a SOD policy. It is a partial control with significant blind spots.

Enforcing SOD across a distributed application estate requires centralized policy management with enforcement that reaches consistently across every application in scope. This is one of the places where legacy architecture shows its limits most clearly, and one of the places where AI-native governance delivers the most immediate practical value.
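The blind spot is easy to show with a toy example. The sketch below maps application-specific entitlements to business duties and checks one SOD rule twice: once with only the ERP in scope, once with every application in scope. The rule, the application names, and the duty mapping are all hypothetical, and a real policy engine would need far more than this.

```python
# Illustrative sketch only: rules, applications, and mappings are hypothetical.

# Each rule names two duties that one person must not hold at the same time.
SOD_RULES = [("create_vendor", "approve_payment")]

# Maps (application, entitlement) to the business duty it represents.
DUTY_MAP = {
    ("erp", "vendor_admin"): "create_vendor",
    ("erp", "ap_approver"): "approve_payment",
    ("procurement_saas", "supplier_editor"): "create_vendor",
    ("bank_portal", "payment_release"): "approve_payment",
}


def find_violations(grants, apps_in_scope):
    """Return {user: [violated rules]} considering only apps_in_scope.

    grants: iterable of (user, application, entitlement) tuples.
    """
    duties_by_user = {}
    for user, app, entitlement in grants:
        duty = DUTY_MAP.get((app, entitlement))
        if app in apps_in_scope and duty is not None:
            duties_by_user.setdefault(user, set()).add(duty)

    violations = {}
    for user, duties in duties_by_user.items():
        broken = [rule for rule in SOD_RULES if set(rule) <= duties]
        if broken:
            violations[user] = broken
    return violations


grants = [
    ("sam", "erp", "vendor_admin"),
    ("sam", "bank_portal", "payment_release"),
]

erp_only = find_violations(grants, {"erp"})
full_estate = find_violations(grants, {"erp", "procurement_saas", "bank_portal"})
print("ERP-only scope:", erp_only)
print("Full estate:", full_estate)
```

The ERP-only check reports nothing, because the conflicting duty lives in the bank portal. The same rule evaluated across the full estate catches it. Same policy, same user, different answer, purely because of coverage.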

If any of those three questions revealed a gap, the important thing to understand is what kind of gap it is. A configuration gap can be closed with effort and the right settings. An architectural gap cannot. And the honest answer for most legacy IGA deployments is that the gaps these questions reveal are architectural.

The part of the report most teams are not thinking about yet: AI agents as identities

There is a section of Gartner's report that I think will age better than almost anything else in it.

Near the beginning, Gartner names a new identity constituency that most governance programs have not yet accounted for: AI agents. Not AI as a tool for governance. AI agents as identities that themselves need to be governed.

The language is precise. Gartner describes AI agents as a distinct workload application identity constituency with their own life cycle and governance requirements. They are not treated as a feature or an add-on to the human identity problem. They are treated as a parallel track that every mature governance program will eventually need to cover.

Think about what that means in practice. Your organization likely already has AI agents operating in your environment. Automated workflows. AI-powered tools that connect to your systems, read data, take actions, and make decisions. Each of those agents has access to something. And in most organizations, that access was granted informally, has never been reviewed, and has no offboarding process attached to it.

That is not a hypothetical future risk. It is the current state of most enterprise environments.

The pattern is the same one we saw with service accounts a decade ago. First, organizations deploy them informally because they solve an immediate problem. Then they proliferate. Then someone realizes there are thousands of them with privileged access and no governance trail. Then the cleanup begins.

AI agents are at the beginning of that curve right now. The organizations that build governance for AI agents into their identity programs today, rather than retrofitting it later, will be significantly ahead of those that wait.
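What "building governance in" might look like at the most basic level is an inventory check. The sketch below flags agent identities that have no owner, no recent review, or no offboarding date, which are the three gaps described above. The record fields and the 90-day review window are assumptions for illustration, not requirements quoted from Gartner.

```python
# Illustrative sketch only: record fields and the review window are assumptions.
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AgentIdentity:
    name: str
    owner: Optional[str]           # accountable human, if any
    last_reviewed: Optional[date]  # last access review, if any
    expires: Optional[date]        # planned offboarding date, if any


def governance_gaps(agent: AgentIdentity, today: date, max_review_age_days: int = 90):
    """List the basic lifecycle controls this agent identity is missing."""
    gaps = []
    if not agent.owner:
        gaps.append("no owner")
    if agent.last_reviewed is None or (today - agent.last_reviewed).days > max_review_age_days:
        gaps.append("review overdue")
    if agent.expires is None:
        gaps.append("no offboarding date")
    return gaps


# Hypothetical inventory.
today = date(2026, 3, 1)
agents = [
    AgentIdentity("invoice-triage-bot", "finance-ops", date(2026, 2, 1), date(2026, 12, 31)),
    AgentIdentity("sales-summary-agent", None, None, None),
]

for agent in agents:
    print(agent.name, governance_gaps(agent, today))
```

The first agent passes all three checks. The second, granted access informally and never looked at again, fails all of them. Most environments I have seen have far more of the second kind than the first.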

AI-native identity governance means governing all the identities in your environment. Human and non-human. That is what the shift Gartner is tracking actually points toward, and it is the version of this problem that will define the next decade of IAM.

What to do if this resonates

Three things worth doing after reading this, in order.

First, run the three questions above against your current environment honestly. Not based on what your platform was supposed to deliver when you implemented it. Based on what it actually delivers today.

Second, if any of those questions revealed a gap, spend time understanding whether it is a configuration gap or an architectural one. That distinction determines whether more effort on your current platform is the right answer or whether the shift Gartner is tracking is the more honest path forward.

Third, if you want a structured way to do that assessment, we built the AI-Native IGA Readiness Guide for exactly this purpose. It walks through a broader diagnostic in a format you can use in internal conversations and planning sessions. It is free and takes about 20 minutes.

The shift Gartner is tracking is already happening. The organizations that understand it clearly are the ones that will be ahead of it.

Barak, CEO and co-founder of Opti, is a cybersecurity innovator with over 20 years of hands-on experience leading strategy, building products, and protecting critical infrastructures. He co-founded Indegy and served as its CEO until its acquisition by Tenable in 2019, where he served as VP. Earlier, he led product design at Stratoscale and managed large-scale cybersecurity projects in the Israel Defense Forces. Barak holds a B.Sc. in Computer Science and Mathematics and an MBA from Tel Aviv University.